How to actually regulate AI [Thoughts]

How governments, policy makers, and regulators can actually step in to make a difference

Hey, it’s Devansh 👋👋

Thoughts is a series on AI Made Simple. In issues of Thoughts, I will share interesting developments/debates in AI, go over my first impressions, and their implications. These will not be as technically deep as my usual ML research/concept breakdowns. Ideas that stand out a lot will then be expanded into further breakdowns. These posts are meant to invite discussions and ideas, so don’t be shy about sharing what you think.

If you’d like to support my writing, consider becoming a premium subscriber to my sister publication Tech Made Simple to support my crippling chocolate milk addiction. Use the button below for a lifetime 50% discount (5 USD/month, or 50 USD/year).

AI is one of the hottest topics among business leaders, academics, and lawmakers in 2023. Because of its potential to impact a variety of critical problems- disease detection, agriculture, law, etc.- AI has generated a lot of buzz. The spotlight has increased regulator interest, as governments want to ensure that society can benefit from the technology while limiting the downside.

An AI Index analysis of the legislative records of 127 countries shows that the number of bills containing “artificial intelligence” that were passed into law grew from just 1 in 2016 to 37 in 2022. An analysis of the parliamentary records on AI in 81 countries likewise shows that mentions of AI in global legislative proceedings have increased nearly 6.5 times since 2016.

However, regulating AI well has proven particularly difficult. Many of the proposed AI Regulations have been toothless, ineffective, and misguided. In this article, I will be going over the following ideas-

Why a Strong Government Presence is a must in AI.

The Current Challenges around AI Regulation.

How Governments can improve AI Safety and Compliance.

Shoutout to Sacha F for this great idea. If you have any topic suggestions, drop me a line and we can discuss them.

PS- I’m currently around NYC. If any of you know about available rentals OR just want to meet up to talk shop, please reach out. Also, no AI Updates for this week. I was supposed to do this article yesterday and the updates today, but I spent yesterday playing AOE2 and Civ 5 and thus got delayed.

Why a strong government presence is a must

One of the most prevalent myths in society is that government presence stifles innovation and efficiency. When we look through history, we get a different story. Corruption and bureaucracy are big impediments to growth, but when functioning well, governments have-

A much higher risk tolerance which allows them to invest in projects that private companies would be too scared to get into. They can also put money into unprofitable public works projects to benefit societies, something private companies can’t do (since they have to be profitable).

A longer time horizon. Companies are beholden to quarterly shareholder reviews, which can put a damper on longer-term projects.

Money- Governments have the money to consistently fund experimental ideas without worrying about market fluctuations. This is a big plus that allows them to actually take projects to completion.

These are massive advantages. Peak Bell Labs, arguably the most innovative company to ever exist (9 Different Nobel Prizes have been awarded for work done at Bell Labs), had a similar structure to enable their world-changing work (I covered Bell Labs and what led to their success here).

Think about the Tech Industry. Computers, the Internet, and many other developments are only possible because governments had the risk appetite to invest in early iterations of these projects without going bust. Public investments into these projects are what enabled the discovery of the basics, which were then used as a foundation for the private sector. This is true even today. Companies like Google, Meta, and OpenAI have made great contributions to AI and Tech that must not be diminished. However, we have lionized these companies while ignoring the millions of hours of legwork by publicly funded PhDs and researchers that enabled these innovations. A minor tangent- scientific journals charging insane prices to gatekeep research funded by public money is theft, and I will die on that hill.

We also need governments to check the power of companies. Left to themselves, corporations have a tendency towards monopolization, price-gouging, and exploitation, which are unfortunate side-effects of an economic system that demands growth every financial quarter. A strong government presence is a must for protecting public interests and countering the information asymmetry inherent in the markets.

The cryptocurrency scams are a clear example of what happens when unregulated markets become mainstream: institutional investors make money through hype, scammers engage in rug pulls, and regular folk get screwed. Critique the bureaucracy and red tape all you want, but strong government regulations provide security and stability to all of us.

This is as true for AI as it is for any other field. AI has the potential for misuse: whether it’s employee evaluation systems unfairly penalizing people with disabilities; credit evaluators lowering the scores of people because they belong to historically disadvantaged groups; or medical AI disproportionately hurting Black people.

In 2019, a bombshell study found that a clinical algorithm many hospitals were using to decide which patients need care was showing racial bias — Black patients had to be deemed much sicker than white patients to be recommended for the same care. This happened because the algorithm had been trained on past data on health care spending, which reflects a history in which Black patients had less to spend on their health care compared to white patients, due to longstanding wealth and income disparities.
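To make the mechanism in that passage concrete, here is a rough sketch of how a proxy label can skew outcomes. Everything in it is an assumption for illustration: the records, the group names, the threshold, and the use of "number of chronic conditions" as a stand-in for real health need are all invented, and none of it comes from the study itself. The toy rule flags patients for extra care based on past spending. Because group B spends less at the same level of sickness, the B patients who get flagged end up being sicker on average.

```python
from statistics import mean

# Hypothetical toy records: (group, chronic_conditions, past_spending_usd).
# All numbers are invented for illustration; they are NOT from the 2019 study.
PATIENTS = [
    ("A", 2, 6000), ("A", 3, 9000), ("A", 4, 12000), ("A", 5, 15000),
    ("B", 2, 3000), ("B", 3, 4500), ("B", 4, 6000), ("B", 5, 7500),
]


def flagged_for_care(patients, spending_threshold):
    """Flag patients whose past spending (the proxy label) meets the threshold."""
    return [p for p in patients if p[2] >= spending_threshold]


def mean_conditions_by_group(patients):
    """Average number of chronic conditions among the given patients, per group."""
    groups = {}
    for group, conditions, _spending in patients:
        groups.setdefault(group, []).append(conditions)
    return {group: mean(values) for group, values in groups.items()}


flagged = flagged_for_care(PATIENTS, spending_threshold=6000)
sickness_at_flag = mean_conditions_by_group(flagged)
print(sickness_at_flag)  # average sickness of flagged patients, per group
```

An auditor doesn't need the model internals to run a check like this. Comparing an independent measure of need across groups at the same decision threshold is enough to surface the gap.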

Stronger policies are a must for AI that is critical to people's lives, since they add another layer of protection against groups recklessly implementing solutions without accounting for downstream effects. Unfortunately, much of the conversation around AI Regulation is misguided, mostly useless, and even actively harmful. Let’s talk about why so many AI Regulations belong in the Disasters Hall of Fame, right alongside 2021-Barcelona and your last breakup.

Why AI Regulation is so difficult

There are a few challenges that impede effective AI Regulation. Firstly, there is a deluge of misinformation in AI. To resurrect a horse that we’ve beaten to death in our cult- there is a lot of money to be made in Sensationalizing AI. Fear is an effective marketing tool, and many of the people championing existential risks are people with a financial interest in doing so. Elon Musk, amongst the most prominent AI Alarmists, called for a pause on the training of LLMs, only to release Grok a few months later. I don’t want to keep repeating points. To those of you who want to read the arguments against AI Doomerism in more detail, please read the following articles-

Why the AI Pause is Misguided- Here I go over why risks from AI are not from superintelligent models, but in the misuse of models (even if they are trivial). I go over some prominent examples such as Gen AI being used in Targeted Misinformation Campaigns and unpack why there was much more to the situation than people claimed.

The lucrative business of lying to you about AI- A deeper look into how influencers and businesses were profiting from the hype around AI (both positive and negative hype).

Since I’ve covered these ideas many times, I have nothing more to add here. I will end this point by saying that one of the most consistent pieces of feedback I get from my readers is that they appreciate my writing because it’s a “counter to the hype”. This should be a clear indication of how noisy the discourse around AI is atm.

That’s it. It seems that there is a lot more direction and hints from humans than was detailed in the original system card or in subsequent media reports. There is also a decided lack of detail (we don’t know what the human prompts were) so it’s hard to evaluate even if GPT-4 “decided” on its own to “lie” to the Task Rabbit worker.

- The excellent article, “Did GPT-4 Hire And Then Lie To a Task Rabbit Worker to Solve a CAPTCHA?”, is a great analysis of how much of the discussion around AI and its capabilities is severely overplayed.

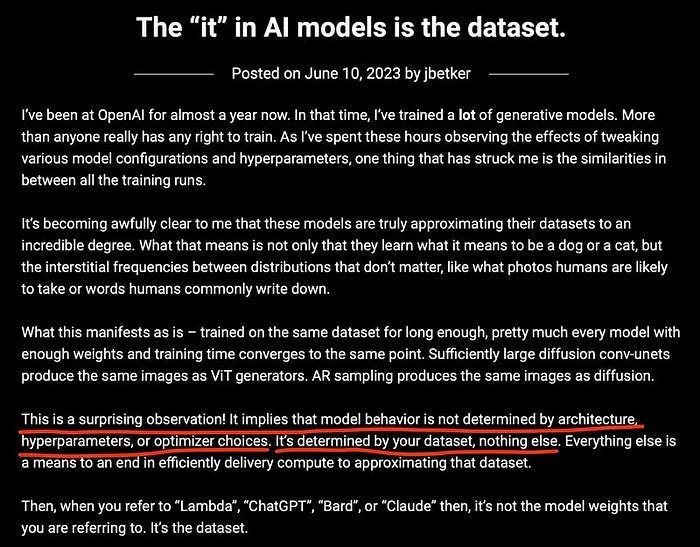

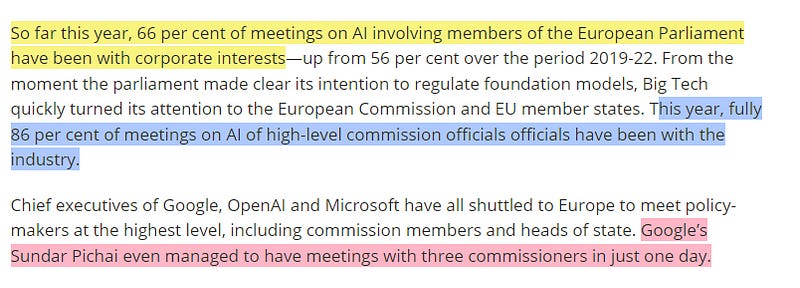

Sensationalism is compounded by another major issue- lobbying by powerful corporations. Companies like Meta, Amazon, Microsoft, and Google spend 113 million euros per year on lobbying in Brussels. Recently, this has shifted towards AI. Google, Microsoft, and Amazon have all invested billions into AI companies, only to have Open Source eat their lunches. These companies have a vested interest in using government regulation as a moat to stop the growth of open-source competitors.

These companies focus on regulatory capture to slow down the development of Open-Source competitors by gating access to models and centralizing Safe AI into a few organizations. By singing Homeric Epics about the destructive potential of powerful models, they hope to make themselves the arbiters of safety. Top-down control would eliminate the biggest advantages of Open Source (nimbleness, diversity, and openness) by giving it the same structure as the big tech giants (without the funding).

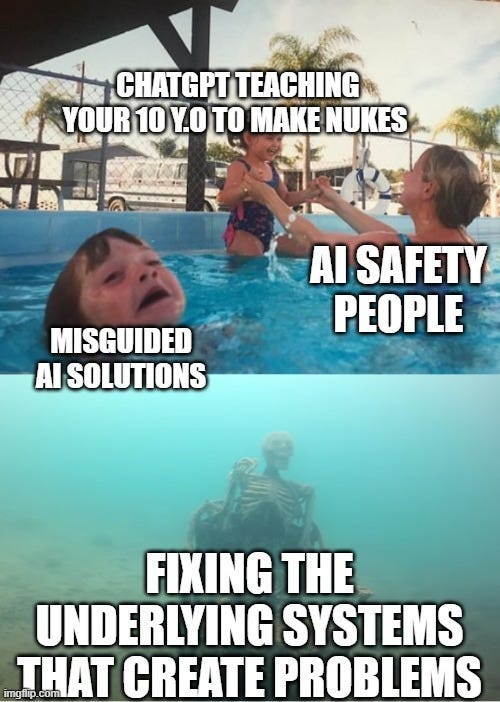

Unfortunately, both the Misinformation and Lobbying work very effectively because they’re simple and sexy. By pointing fingers at omnipotent models that will awaken to destroy society, they can make you forget about the real challenges with AI: the flawed systems that skew AI, our lack of understanding behind the decision-making of the models, and how fragile the systems we create are. By quixotically fighting the phantoms of SuperIntelligent AI, no one needs to worry about the more difficult (but banal) challenges- ensuring that we use AI only when appropriate, that we build useful tools, and that the AI we build doesn’t have dead code that costs us 450 million dollars in 45 minutes.

When the New York Stock Exchange opened on the morning of 1st August 2012, Knight Capital Group’s newly updated high-speed algorithmic router incorrectly generated orders that flooded the market with trades. About 45 minutes and 400 million shares later, they succeeded in taking the system offline. When the dust settled, they had effectively lost over $10 million per minute.

AI Regulation is a mess because it’s sexier to busy ourselves protecting society from the rise of Terminator than it is to fix the problems created by our limitations. And it allows companies to keep operating as they have, without needing to change a thing.

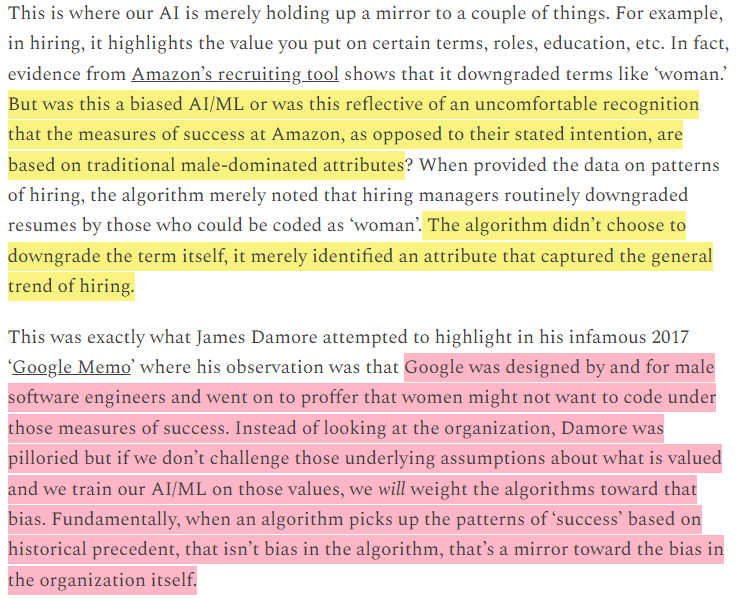

Take a look at the two passages from the exceptional newsletter, Polymathic Being. In them, Michael goes into how the Amazon AI being biased against women is an indication of biased evaluation criteria at the company.

This is the problem with the regulation being floated. The AI we build is a reflection of our society and processes. Studying its flaws is a fantastic opportunity to understand the pressures and biases of our system. We can’t do so if we’re too busy letting holier-than-thou champions of Safety tell us what is and is not ‘safe’ or ‘appropriate’ to investigate/build upon. To really ensure that AI is safe, we need to stop looking down from our ivory towers and go deep into the blood and guts of our systems.

So how can this be accomplished? Let’s talk about that to finish up.

Why We Need AI Audits

To me, it is foolish to regulate the technology because it is contextual, ever-changing, and often not the issue. Instead, it is more fruitful to regulate the industry and its processes.

Just as with the Financial Space, I believe that third-party audits are going to be a huge plus in evaluating AI Systems. This would require the following-

A well-defined checklist of evaluation criteria through which an AI system will be evaluated. The checklist would need to define multiple dimensions of AI Safety, along the entire pipeline (not just the model itself).

Experts to evaluate the pipelines.

LLMs and other data tools to evaluate large datasets for potential problems (I talked about how Prompt Engineering could be used here)
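As a rough sketch of what the checklist piece could look like in practice, here is one way to represent audit items that span the whole pipeline rather than just the model. The class names, stage labels, system name, and example questions are all hypothetical placeholders I made up for illustration. A real checklist would have to be defined by regulators and domain experts.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class CheckItem:
    stage: str                     # pipeline stage, e.g. "data", "model", "deployment"
    question: str                  # what the auditor has to verify
    passed: Optional[bool] = None  # None means "not reviewed yet"
    notes: str = ""


@dataclass
class AuditReport:
    system_name: str
    items: List[CheckItem] = field(default_factory=list)

    def failures(self) -> List[CheckItem]:
        """Items an auditor reviewed and marked as failing."""
        return [item for item in self.items if item.passed is False]

    def open_items(self) -> List[CheckItem]:
        """Items nobody has reviewed yet."""
        return [item for item in self.items if item.passed is None]

    def summary(self) -> Dict[str, Dict[str, int]]:
        """Count passed / failed / open items for each pipeline stage."""
        by_stage: Dict[str, Dict[str, int]] = {}
        for item in self.items:
            counts = by_stage.setdefault(
                item.stage, {"passed": 0, "failed": 0, "open": 0}
            )
            if item.passed is None:
                counts["open"] += 1
            elif item.passed:
                counts["passed"] += 1
            else:
                counts["failed"] += 1
        return by_stage


# Hypothetical example: the system and the questions are illustrative only.
report = AuditReport(
    "resume-screener",
    [
        CheckItem("data", "Were historical labels checked for group-level skew?",
                  passed=False, notes="Past hiring decisions used as labels."),
        CheckItem("model", "Are error rates reported per demographic group?",
                  passed=True),
        CheckItem("deployment", "Is there a human appeal path for automated decisions?"),
    ],
)
print(report.summary())
```

Tracking "open" separately from "failed" matters here. An audit that skips a stage shouldn't be able to pass by omission.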

Having external experts come in ensures that they don’t have a bias towards the system design. Fidel Rodriguez, a senior Data Leader at LinkedIn, once told me about Gemba Walks and how they were critical to his team-

Imagine your team has built a feature. Before shipping it out, have a complete outsider ask you questions about it. They can ask you whatever they want. This will help you see the feature as an outsider would, which can be crucial in identifying improvements/hidden flaws.

-Read more about how Fidel gets peak performance from his Data Teams

The audits would accomplish something similar.

Another benefit of these audits would be their specificity. Instead of building castles in the sky about abstract hypotheticals, the audits would study real products with real results. This creates better feedback loops, allowing us to iterate on the process to improve the details. These checks would also force concrete action from organizations and would ensure that people at least think before deploying AI systems.

Initially, these checks would target sensitive AI systems that have a major impact on people’s lives- Medical AI, Finance, Employee Assessments, AI used to create Public Policy, etc. We can extend the process as required.

People (including myself) have already been hired to evaluate the Safety of systems. These audits would only take this a step further, systematizing the process to start deliberately improving upon it. I’ve started some work on it. If any of you are interested in learning more/working with me, please reach out.

If you liked this article and wish to share it, please refer to the following guidelines.

That is it for this piece. I appreciate your time. As always, if you’re interested in working with me or checking out my other work, my links will be at the end of this email/post. And if you found value in this write-up, I would appreciate you sharing it with more people. It is word-of-mouth referrals like yours that help me grow.

Reach out to me

Use the links below to check out my other content, learn more about tutoring, reach out to me about projects, or just to say hi.

Small Snippets about Tech, AI and Machine Learning over here

AI Newsletter- https://artificialintelligencemadesimple.substack.com/

My grandma’s favorite Tech Newsletter- https://codinginterviewsmadesimple.substack.com/

Check out my other articles on Medium: https://rb.gy/zn1aiu

My YouTube: https://rb.gy/88iwdd

Reach out to me on LinkedIn. Let’s connect: https://rb.gy/m5ok2y

My Instagram: https://rb.gy/gmvuy9

My Twitter: https://twitter.com/Machine01776819

I've always thought it comical that we're now getting into regulating AI when it's been controlling a lot more than the general public thinks for years already.

I do think the biggest issue in regulation is that we really won't like holding the mirror up to ourselves when we don't like the output of the algorithm. I fear the inclination will be to 'shoot the messenger' that is AI instead of actually addressing the systemic issues that AI uncovers.